Find the Moving Average of This Year Against the Average of the Last G Years

Smoothing of a noisy sine (blue curve) with a moving average (red curve).

In statistics, a moving average (rolling average or running average) is a calculation to analyze data points by creating a series of averages of different subsets of the full data set. It is also called a moving mean (MM)[1] or rolling mean and is a type of finite impulse response filter. Variations include: simple, cumulative, or weighted forms (described below).

Given a series of numbers and a fixed subset size, the first element of the moving average is obtained by taking the average of the initial fixed subset of the number series. Then the subset is modified by "shifting forward"; that is, excluding the first number of the series and including the next value in the subset.

A moving average is commonly used with time series data to smooth out short-term fluctuations and highlight longer-term trends or cycles. The threshold between short-term and long-term depends on the application, and the parameters of the moving average will be set accordingly. For example, it is often used in technical analysis of financial data, like stock prices, returns or trading volumes. It is also used in economics to examine gross domestic product, employment or other macroeconomic time series. Mathematically, a moving average is a type of convolution and so it can be viewed as an example of a low-pass filter used in signal processing. When used with non-time series data, a moving average filters higher frequency components without any specific connection to time, although typically some kind of ordering is implied. Viewed simplistically it can be regarded as smoothing the data.

Simple moving average

In financial applications a simple moving average (SMA) is the unweighted mean of the previous $k$ data-points. However, in science and engineering, the mean is normally taken from an equal number of data on either side of a central value. This ensures that variations in the mean are aligned with the variations in the data rather than being shifted in time. An example of a simple equally weighted running mean is the mean over the last $k$ entries of a data-set containing $n$ entries. Let those data-points be $p_1, p_2, \dots, p_n$. This could be closing prices of a stock. The mean over the last $k$ data-points (days in this example) is denoted as $\textit{SMA}_k$ and calculated as:

$$\textit{SMA}_k = \frac{p_{n-k+1} + p_{n-k+2} + \cdots + p_n}{k} = \frac{1}{k}\sum_{i=n-k+1}^{n} p_i$$

When calculating the next mean $\textit{SMA}_{k,\text{next}}$ with the same sampling width $k$, the range from $n-k+2$ to $n+1$ is considered. A new value $p_{n+1}$ comes into the sum and the oldest value $p_{n-k+1}$ drops out. This simplifies the calculations by reusing the previous mean $\textit{SMA}_{k,\text{prev}}$:

$$\textit{SMA}_{k,\text{next}} = \frac{1}{k}\sum_{i=n-k+2}^{n+1} p_i = \textit{SMA}_{k,\text{prev}} + \frac{1}{k}\left(p_{n+1} - p_{n-k+1}\right)$$

This means that the moving average filter can be computed quite cheaply on real-time data with a FIFO / circular buffer and only 3 arithmetic steps.

During the initial filling of the FIFO / circular buffer the sampling window is equal to the data-set size (the window length $k$ equals the number of entries $n$ received so far), and the average calculation is performed as a cumulative moving average.
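The buffer-based update described above can be sketched as follows. This is a minimal illustration, not a reference implementation: the class name `StreamingSMA` and its `update` method are hypothetical, and a `collections.deque` stands in for the FIFO / circular buffer.

```python
from collections import deque


class StreamingSMA:
    """Simple moving average over the last k values, O(1) work per new sample."""

    def __init__(self, k):
        if k <= 0:
            raise ValueError("window length k must be positive")
        self.k = k
        self.window = deque()  # FIFO holding at most k recent values
        self.total = 0.0       # running sum of the values currently in the window

    def update(self, value):
        # The new value comes into the sum ...
        self.window.append(value)
        self.total += value
        # ... and, once the buffer is full, the oldest value drops out.
        if len(self.window) > self.k:
            self.total -= self.window.popleft()
        # While the buffer is still filling, len(window) < k, so this
        # is a cumulative average of everything seen so far.
        return self.total / len(self.window)


sma = StreamingSMA(3)
results = [sma.update(p) for p in [1, 2, 3, 4, 5]]
print(results)
```

Keeping a running sum is cheap, but with floating-point data the sum can slowly accumulate rounding error over very long streams; recomputing it from the buffer now and then is one possible mitigation.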

The period selected (the window length $k$) depends on the type of movement of interest, such as short, intermediate, or long-term. In financial terms, moving-average levels can be interpreted as support in a falling market or resistance in a rising market.

If the data used are not centered around the mean, a simple moving average lags behind the latest datum by half the sample width. An SMA can also be disproportionately influenced by old data dropping out or new data coming in. One characteristic of the SMA is that if the data has a periodic fluctuation, then applying an SMA of that period will eliminate that variation (the average always containing one complete cycle). But a perfectly regular cycle is rarely encountered.[2]

For a number of applications, it is advantageous to avoid the shifting induced by using only "past" data. Hence a central moving average can be computed, using data equally spaced on either side of the point in the series where the mean is calculated.[3] This requires using an odd number of points in the sample window.

A major drawback of the SMA is that it lets through a significant amount of the signal shorter than the window length. Worse, it actually inverts it. This can lead to unexpected artifacts, such as peaks in the smoothed result appearing where there were troughs in the data. It also leads to the result being less smooth than expected since some of the higher frequencies are not properly removed.
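As a small illustration of both the central moving average and the inversion artifact, the sketch below averages an alternating signal (period 2) with a central window of 3 points. The function name `central_moving_average` and the toy signal are assumptions made for this example only.

```python
def central_moving_average(data, k):
    """Mean of k points centred on each position; k must be odd."""
    if k <= 0 or k % 2 == 0:
        raise ValueError("k must be a positive odd number")
    half = k // 2
    # Output element j is centred on data[j + half]; the edges are skipped.
    return [
        sum(data[i - half:i + half + 1]) / k
        for i in range(half, len(data) - half)
    ]


signal = [1, -1, 1, -1, 1, -1, 1]   # fluctuation shorter than the window
smoothed = central_moving_average(signal, 3)
aligned = signal[1:-1]              # the data points the outputs are centred on

for value, mean in zip(aligned, smoothed):
    print(f"data {value:+d} -> smoothed {mean:+.3f}")
```

In this toy case every smoothed value has the opposite sign to the data point it is centred on: the fluctuation is attenuated to a third of its amplitude but inverted rather than removed.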

Cumulative moving average

In a cumulative moving average (CMA), the data arrive in an ordered datum stream, and the user would like to get the average of all of the data up until the current datum. For example, an investor may want the average price of all of the stock transactions for a particular stock up until the current time. As each new transaction occurs, the average price at the time of the transaction can be calculated for all of the transactions up to that point using the cumulative average, typically an equally weighted average of the sequence of $n$ values $x_1, \ldots, x_n$ up to the current time:

$$\textit{CMA}_n = \frac{x_1 + \cdots + x_n}{n}$$

The brute-force method to calculate this would be to store all of the data and calculate the sum and divide by the number of points every time a new datum arrived. However, it is possible to simply update the cumulative average as a new value, $x_{n+1}$, becomes available, using the formula

$$\textit{CMA}_{n+1} = \frac{x_{n+1} + n \cdot \textit{CMA}_n}{n+1}$$

Thus the current cumulative average for a new datum is equal to the previous cumulative average, times $n$, plus the latest datum, all divided by the number of points received so far, $n+1$. When all of the data arrive ($n = N$), then the cumulative average will equal the final average. It is also possible to store a running total of the data as well as the number of points and divide the total by the number of points to get the CMA each time a new datum arrives.

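The derivation of the cumulative average formula is straightforward. Using

$$x_1 + \cdots + x_n = n \cdot \textit{CMA}_n$$

and similarly for $n + 1$, it is seen that

$$x_{n+1} = (x_1 + \cdots + x_{n+1}) - (x_1 + \cdots + x_n) = (n+1) \cdot \textit{CMA}_{n+1} - n \cdot \textit{CMA}_n$$

Solving this equation for $\textit{CMA}_{n+1}$ results in

$$\begin{aligned}
\textit{CMA}_{n+1} &= \frac{x_{n+1} + n \cdot \textit{CMA}_n}{n+1} \\
&= \frac{x_{n+1} + (n+1-1) \cdot \textit{CMA}_n}{n+1} \\
&= \frac{(n+1) \cdot \textit{CMA}_n + x_{n+1} - \textit{CMA}_n}{n+1} \\
&= \textit{CMA}_n + \frac{x_{n+1} - \textit{CMA}_n}{n+1}
\end{aligned}$$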
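The incremental update, written in the equivalent form "previous average plus the new datum's deviation from it divided by the new count", can be sketched as below. The function name `cumulative_moving_average` is illustrative only.

```python
def cumulative_moving_average(stream):
    """Return the cumulative average after each datum, without storing the data."""
    cma = 0.0
    count = 0
    averages = []
    for x in stream:
        count += 1
        # CMA_{n+1} = CMA_n + (x_{n+1} - CMA_n) / (n + 1), with count == n + 1 here.
        cma += (x - cma) / count
        averages.append(cma)
    return averages


averages = cumulative_moving_average([2, 4, 6])
print(averages)
```

Only the current average and the count are kept between updates, which is the point of the formula: no history needs to be stored.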

Weighted moving average

A weighted average is an average that has multiplying factors to give different weights to data at different positions in the sample window. Mathematically, the weighted moving average is the convolution of the data with a fixed weighting function. One application is removing pixelization from a digital graphical image.[citation needed]

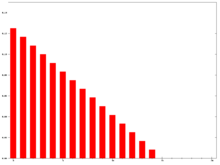

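In technical analysis of financial data, a weighted moving average (WMA) has the specific meaning of weights that decrease in arithmetical progression.[4] In an $n$-day WMA the latest day has weight $n$, the second latest $n-1$, etc., down to one.

$$\text{WMA}_M = \frac{n p_M + (n-1) p_{M-1} + \cdots + 2 p_{M-n+2} + p_{M-n+1}}{n + (n-1) + \cdots + 2 + 1}$$

The denominator is a triangle number equal to $\frac{n(n+1)}{2}$. In the more general case the denominator will always be the sum of the individual weights.

When calculating the WMA across successive values, the difference between the numerators of $\text{WMA}_{M+1}$ and $\text{WMA}_M$ is $n p_{M+1} - p_M - \cdots - p_{M-n+1}$. If we denote the sum $p_M + \cdots + p_{M-n+1}$ by $\text{Total}_M$, then

$$\begin{aligned}
\text{Total}_{M+1} &= \text{Total}_M + p_{M+1} - p_{M-n+1} \\
\text{Numerator}_{M+1} &= \text{Numerator}_M + n p_{M+1} - \text{Total}_M \\
\text{WMA}_{M+1} &= \frac{\text{Numerator}_{M+1}}{n + (n-1) + \cdots + 2 + 1}
\end{aligned}$$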

The graph at the right shows how the weights decrease, from highest weight for the most recent data, down to zero. It can be compared to the weights in the exponential moving average which follows.
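The running-total update for the linearly weighted moving average can be sketched as follows. The function name and the choice to emit values only once the window is full are assumptions of this example, not something the text prescribes.

```python
from collections import deque


def weighted_moving_average(prices, n):
    """n-day WMA: newest price has weight n, the oldest in the window weight 1."""
    if n <= 0:
        raise ValueError("n must be positive")
    denominator = n * (n + 1) // 2   # triangle number n + (n-1) + ... + 1
    window = deque()
    total = 0.0       # plain sum of the prices in the window
    numerator = 0.0   # weighted sum of the prices in the window
    result = []
    for p in prices:
        if len(window) < n:
            # Filling phase: the i-th price received gets weight i.
            window.append(p)
            total += p
            numerator += len(window) * p
        else:
            # Every old weight drops by one (subtract the old total),
            # and the new price enters with weight n.
            numerator += n * p - total
            total += p - window.popleft()
            window.append(p)
        if len(window) == n:
            result.append(numerator / denominator)
    return result


wma = weighted_moving_average([1, 2, 3, 4, 5], 3)
print(wma)
```

Note that the numerator must be updated with the old total before the total itself is advanced; swapping the two lines would subtract the wrong sum.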

Exponential moving average

An exponential moving average (EMA), also known as an exponentially weighted moving average (EWMA),[5] is a first-order infinite impulse response filter that applies weighting factors which decrease exponentially. The weighting for each older datum decreases exponentially, never reaching zero. The graph at right shows an example of the weight decrease.

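The EMA for a series $Y$ may be calculated recursively:

$$S_t = \begin{cases} Y_1, & t = 1 \\ \alpha Y_t + (1-\alpha) \cdot S_{t-1}, & t > 1 \end{cases}$$

Where:

- The coefficient $\alpha$ represents the degree of weighting decrease, a constant smoothing factor between 0 and 1. A higher $\alpha$ discounts older observations faster.

- $Y_t$ is the value at a time period $t$.

- $S_t$ is the value of the EMA at any time period $t$.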

$S_1$ may be initialized in a number of different ways, most commonly by setting $S_1$ to $Y_1$ as shown above, though other techniques exist, such as setting $S_1$ to an average of the first 4 or 5 observations. The importance of the $S_1$ initialization's effect on the resultant moving average depends on $\alpha$; smaller $\alpha$ values make the choice of $S_1$ relatively more important than larger $\alpha$ values, since a higher $\alpha$ discounts older observations faster.

Whatever is done for $S_1$ it assumes something about values prior to the available data and is necessarily in error. In view of this, the early results should be regarded as unreliable until the iterations have had time to converge. This is sometimes called a 'spin-up' interval. One way to assess when it can be regarded as reliable is to consider the required accuracy of the result. For example, if 3% accuracy is required, initialising with $Y_1$ and taking data after five time constants (defined above) will ensure that the calculation has converged to within 3% (only <3% of $Y_1$ will remain in the result). Sometimes with very small alpha, this can mean little of the result is useful. This is analogous to the problem of using a convolution filter (such as a weighted average) with a very long window.
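The recursion with the most common initialization (the first EMA value set to the first observation) can be sketched as below. The function name is illustrative, and other initializations mentioned above would need a different first step.

```python
def exponential_moving_average(values, alpha):
    """EMA with S_1 = Y_1 and S_t = alpha * Y_t + (1 - alpha) * S_{t-1}."""
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    smoothed = []
    s = None
    for y in values:
        # First observation initialises the average; later ones blend in.
        s = y if s is None else alpha * y + (1 - alpha) * s
        smoothed.append(s)
    return smoothed


ema = exponential_moving_average([1, 2, 3], 0.5)
print(ema)
```

Because the first output equals the first observation exactly, early outputs carry the initialization error discussed above; a caller that cares may want to discard the spin-up interval.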

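This formulation is according to Hunter (1986).[6] By repeated application of this formula for different times, we can eventually write $S_t$ as a weighted sum of the datum points $Y_t$, as:

$$S_t = \alpha\left[Y_t + (1-\alpha) Y_{t-1} + (1-\alpha)^2 Y_{t-2} + \cdots + (1-\alpha)^k Y_{t-k}\right] + (1-\alpha)^{k+1} S_{t-(k+1)}$$

for any suitable $k \in \{0, 1, 2, \ldots\}$. The weight of the general datum $Y_{t-i}$ is $\alpha(1-\alpha)^i$.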

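This formula can also be expressed in technical analysis terms as follows, showing how the EMA steps towards the latest datum, but only by a proportion of the difference (each time):

$$\text{EMA}_\text{today} = \text{EMA}_\text{yesterday} + \alpha\left[\text{price}_\text{today} - \text{EMA}_\text{yesterday}\right]$$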

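Expanding out $\text{EMA}_\text{yesterday}$ each time results in the following power series, showing how the weighting factor on each datum $p_1$, $p_2$, etc., decreases exponentially:

$$\text{EMA}_\text{today} = \alpha\left[p_1 + (1-\alpha) p_2 + (1-\alpha)^2 p_3 + (1-\alpha)^3 p_4 + \cdots\right]$$

where $p_1$ is $\text{price}_\text{today}$, $p_2$ is $\text{price}_\text{yesterday}$, and so on. Equivalently,

$$\text{EMA}_\text{today} = \frac{p_1 + (1-\alpha) p_2 + (1-\alpha)^2 p_3 + (1-\alpha)^3 p_4 + \cdots}{1 + (1-\alpha) + (1-\alpha)^2 + (1-\alpha)^3 + \cdots}$$

since $1/\alpha = 1 + (1-\alpha) + (1-\alpha)^2 + \cdots$.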

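It can also be calculated recursively without introducing the error when initializing the first estimate ($n$ starts from 1):

$$\begin{aligned}
\text{EMA}_n &= \frac{\text{WeightedSum}_n}{\text{WeightedCount}_n} \\
\text{WeightedSum}_n &= p_n + (1-\alpha)\,\text{WeightedSum}_{n-1} \\
\text{WeightedCount}_n &= 1 + (1-\alpha)\,\text{WeightedCount}_{n-1} = \frac{1 - (1-\alpha)^n}{1 - (1-\alpha)}
\end{aligned}$$

- Assume $\text{WeightedSum}_0 = \text{WeightedCount}_0 = 0$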

This is an infinite sum with decreasing terms.
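One way to realise a recursion that avoids the initialization error is to track a discounted sum of the prices together with a discounted count of how many prices have contributed, both starting at zero, and to divide one by the other. The sketch below assumes that scheme; the variable names `weighted_sum` and `weighted_count` are illustrative.

```python
def ema_without_init_error(prices, alpha):
    """EMA as a ratio of a discounted sum to a discounted count (both start at 0)."""
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    weighted_sum = 0.0
    weighted_count = 0.0
    result = []
    for p in prices:
        # Older contributions are discounted by (1 - alpha) at every step.
        weighted_sum = p + (1 - alpha) * weighted_sum
        weighted_count = 1 + (1 - alpha) * weighted_count
        result.append(weighted_sum / weighted_count)
    return result


corrected = ema_without_init_error([1, 2], 0.5)
print(corrected)
```

With this normalisation each output is a true weighted average of the data seen so far, so no assumption about values before the first datum is needed.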

Approximating the EMA with a limited number of terms

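The question of how far back to go for an initial value depends, in the worst case, on the data. Large price values in old data will affect the total even if their weighting is very small. If prices have small variations then just the weighting can be considered. The power formula above gives a starting value for a particular day, after which the successive days formula shown first can be applied. The weight omitted by stopping after $k$ terms is

$$\alpha\left[(1-\alpha)^k + (1-\alpha)^{k+1} + (1-\alpha)^{k+2} + \cdots\right],$$

which is

$$\alpha(1-\alpha)^k\left[1 + (1-\alpha) + (1-\alpha)^2 + \cdots\right],$$

i.e. a fraction

$$\begin{aligned}
&\frac{\text{weight omitted by stopping after } k \text{ terms}}{\text{total weight}} \\
={}&\frac{\alpha\left[(1-\alpha)^k + (1-\alpha)^{k+1} + (1-\alpha)^{k+2} + \cdots\right]}{\alpha\left[1 + (1-\alpha) + (1-\alpha)^2 + \cdots\right]} \\
={}&\frac{\alpha(1-\alpha)^k \frac{1}{1-(1-\alpha)}}{\frac{\alpha}{1-(1-\alpha)}} \\
={}&(1-\alpha)^k
\end{aligned}$$ [7]

out of the total weight.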

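For example, to have 99.9% of the weight, set the above ratio equal to 0.1% and solve for $k$:

$$k = \frac{\log(0.001)}{\log(1-\alpha)}$$

to determine how many terms should be used. Since $\alpha \to 0$ as $N \to \infty$, we know $\log(1-\alpha)$ approaches $-\alpha$ as $N$ increases.[8] This gives:

$$k \approx \frac{\log(0.001)}{-\alpha}$$

When $\alpha$ is related to $N$ as $\alpha = \frac{2}{N+1}$, this simplifies to approximately[9]

$$k \approx 3.45\,(N+1)$$

for this example (99.9% weight).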
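The exact term count and the large-N approximation can be compared numerically. In the sketch below the function names are illustrative, and rounding the exact value up to a whole number of terms is a choice made for this example.

```python
import math


def terms_for_omitted_weight(alpha, omitted=0.001):
    """Smallest k with (1 - alpha)**k <= omitted, i.e. k = log(omitted) / log(1 - alpha)."""
    if not 0 < alpha < 1:
        raise ValueError("alpha must be strictly between 0 and 1")
    return math.ceil(math.log(omitted) / math.log(1 - alpha))


def approximate_terms(n_days, omitted=0.001):
    """Large-N approximation with alpha = 2 / (N + 1): k ~ -ln(omitted) * (N + 1) / 2."""
    return -math.log(omitted) * (n_days + 1) / 2


n_days = 19
alpha = 2 / (n_days + 1)            # 0.1
exact_k = terms_for_omitted_weight(alpha)
approx_k = approximate_terms(n_days)
print(exact_k, round(approx_k, 2))
```

For moderate N the approximation slightly overestimates the number of terms, because $-\log(1-\alpha)$ is a little larger than $\alpha$.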

Relationship between SMA and EMA

Note that there is no "accepted" value that should be chosen for $\alpha$, although there are some recommended values based on the application. A commonly used value for $\alpha$ is $\alpha = 2/(N+1)$. This is because the weights of an SMA and EMA have the same "center of mass" when $\alpha_\text{EMA} = 2/(N_\text{SMA}+1)$.

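[Proof]

The weights of an $N$-day SMA have a "center of mass" on the $R_\text{SMA}$-th day, where

$$R_\text{SMA} = \frac{N+1}{2}$$

(or $R_\text{SMA} = \frac{N-1}{2}$, if we use zero-based indexing)

For the remainder of this proof we will use one-based indexing.

Now meanwhile, the weights of an EMA have center of mass

$$R_\text{EMA} = \alpha\left[1 + 2(1-\alpha) + 3(1-\alpha)^2 + \cdots + k(1-\alpha)^{k-1} + \cdots\right]$$

That is,

$$R_\text{EMA} = \alpha\sum_{k=1}^{\infty} k(1-\alpha)^{k-1}$$

We also know the Maclaurin Series

$$\frac{1}{1-x} = \sum_{k=0}^{\infty} x^k$$

Taking derivatives of both sides with respect to $x$ gives:

$$(1-x)^{-2} = \sum_{k=0}^{\infty} k x^{k-1}$$

or

$$\frac{1}{(1-x)^2} = \sum_{k=1}^{\infty} k x^{k-1}$$

Substituting $x = 1-\alpha$, we get

$$R_\text{EMA} = \alpha \cdot \alpha^{-2}$$

or

$$R_\text{EMA} = \frac{1}{\alpha}$$

So the value of $\alpha$ that sets $R_\text{SMA} = R_\text{EMA}$ is, in fact:

$$\frac{N+1}{2} = \frac{1}{\alpha}$$

or

$$\alpha = \frac{2}{N+1}$$

And so $\alpha = \frac{2}{N+1}$ is the value of $\alpha$ that creates an EMA whose weights have the same center of gravity as would the equivalent $N$-day SMA.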

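This is also why sometimes an EMA is referred to as an $N$-day EMA. Despite the name suggesting there are $N$ periods, the terminology only specifies the $\alpha$ factor. $N$ is not a stopping point for the calculation in the way it is in an SMA or WMA. For sufficiently large $N$, the first $N$ datum points in an EMA represent about 86% of the total weight in the calculation when $\alpha = 2/(N+1)$:

$$\frac{\alpha\left[1 + (1-\alpha) + (1-\alpha)^2 + \cdots + (1-\alpha)^{N-1}\right]}{\alpha\left[1 + (1-\alpha) + (1-\alpha)^2 + \cdots\right]} = 1 - (1-\alpha)^N = 1 - \left(1 - \frac{2}{N+1}\right)^{N}$$ [11]

which tends to $1 - e^{-2} \approx 0.8647$ as $N$ increases.[12]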

The designation of $\alpha = 2/(N+1)$ is not a requirement. (For example, a similar proof could be used to just as easily determine that the EMA with a half-life of $N$ days is $\alpha = 1 - 0.5^{1/N}$ or that the EMA with the same median as an $N$-day SMA is $\alpha = 1 - 0.5^{1/(0.5N)}$.) In fact, $2/(N+1)$ is merely a common convention to form an intuitive understanding of the relationship between EMAs and SMAs, for industries where both are commonly used together on the same datasets. In reality, an EMA with any value of $\alpha$ can be used, and can be named either by stating the value of $\alpha$, or with the more familiar $N$-day EMA terminology letting $\alpha = 2/(N+1)$.
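The conventions above are easy to check numerically. The helper names below are illustrative; the sketch only evaluates the closed forms for the weight carried by the first N terms and for the half-life choice of the smoothing factor.

```python
import math


def alpha_from_span(n_days):
    """Common convention: alpha = 2 / (N + 1)."""
    return 2 / (n_days + 1)


def alpha_from_half_life(n_days):
    """Alpha for which a datum's weight halves every N days."""
    return 1 - 0.5 ** (1 / n_days)


def weight_of_first_n_terms(n_days):
    """Share of total EMA weight on the newest N data when alpha = 2 / (N + 1)."""
    alpha = alpha_from_span(n_days)
    return 1 - (1 - alpha) ** n_days


for n in (10, 50, 1000):
    print(n, round(weight_of_first_n_terms(n), 4))
print("limit:", round(1 - math.exp(-2), 4))
```

The printed shares approach the limiting value from above as N grows, which is consistent with the "about 86%" figure quoted for sufficiently large N.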

Exponentially weighted moving variance and standard deviation

In addition to the mean, we may also be interested in the variance and in the standard deviation to evaluate the statistical significance of a deviation from the mean.

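EWMVar can be computed easily along with the moving average. The starting values are $\text{EMA}_1 = x_1$ and $\text{EMVar}_1 = 0$, and we then compute the subsequent values using:[13]

$$\begin{aligned}
\delta_i &= x_i - \text{EMA}_{i-1} \\
\text{EMA}_i &= \text{EMA}_{i-1} + \alpha \cdot \delta_i \\
\text{EMVar}_i &= (1-\alpha) \cdot \left(\text{EMVar}_{i-1} + \alpha \cdot \delta_i^2\right)
\end{aligned}$$

From this, the exponentially weighted moving standard deviation can be computed as $\text{EMSD}_i = \sqrt{\text{EMVar}_i}$. We can then use the standard score to normalize data with respect to the moving average and variance. This algorithm is based on Welford's algorithm for computing the variance.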
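A minimal sketch of computing the exponentially weighted mean and variance together is given below. It assumes the incremental update from the cited reference (Finch): the deviation of the new datum from the previous mean drives both the mean and the variance update. Function and variable names are illustrative.

```python
import math


def ew_mean_and_variance(values, alpha):
    """Return a list of (mean, variance) pairs, one per datum."""
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    pairs = []
    mean = None
    variance = 0.0
    for x in values:
        if mean is None:
            mean = x                 # start: mean = first datum, variance = 0
        else:
            delta = x - mean         # deviation from the previous mean
            mean = mean + alpha * delta
            variance = (1 - alpha) * (variance + alpha * delta * delta)
        pairs.append((mean, variance))
    return pairs


pairs = ew_mean_and_variance([1, 3], 0.5)
mean, variance = pairs[-1]
print(mean, variance, math.sqrt(variance))
```

A standard score for a new reading can then be formed as its deviation from the current mean divided by the square root of the current variance, provided the variance is non-zero.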

Modified moving average

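A modified moving average (MMA), running moving average (RMA), or smoothed moving average (SMMA) is defined as:

$$\overline{p}_{\text{MM},\text{today}} = \frac{(N-1)\,\overline{p}_{\text{MM},\text{yesterday}} + p_\text{today}}{N}$$

In short, this is an exponential moving average, with $\alpha = 1/N$. The only difference between EMA and SMMA/RMA/MMA is how $\alpha$ is computed from $N$. For EMA the customary choice is $\alpha = 2/(N+1)$.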

Application to measuring computer performance

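Some computer performance metrics, e.g. the average process queue length, or the average CPU utilization, use a form of exponential moving average.

$$S_n = \alpha(t_n - t_{n-1})\,Y_n + \left[1 - \alpha(t_n - t_{n-1})\right] S_{n-1}.$$

Here $\alpha$ is defined as a function of time between two readings. An example of a coefficient giving bigger weight to the current reading, and smaller weight to the older readings is

$$\alpha(t_n - t_{n-1}) = 1 - \exp\left(-\frac{t_n - t_{n-1}}{W \cdot 60}\right)$$

where exp() is the exponential function, time for readings $t_n$ is expressed in seconds, and $W$ is the period of time in minutes over which the reading is said to be averaged (the mean lifetime of each reading in the average). Given the above definition of $\alpha$, the moving average can be expressed as

$$S_n = \left[1 - \exp\left(-\frac{t_n - t_{n-1}}{W \cdot 60}\right)\right] Y_n + \exp\left(-\frac{t_n - t_{n-1}}{W \cdot 60}\right) S_{n-1}$$

For example, a 15-minute average $L$ of a process queue length $Q$, measured every 5 seconds (time difference is 5 seconds), is computed as

$$\begin{aligned}
L_n &= \left[1 - \exp\left(-\frac{5}{15 \cdot 60}\right)\right] Q_n + e^{-\frac{5}{15 \cdot 60}} L_{n-1} \\
&= \left[1 - \exp\left(-\frac{1}{180}\right)\right] Q_n + e^{-\frac{1}{180}} L_{n-1} \\
&= Q_n + e^{-\frac{1}{180}}\left(L_{n-1} - Q_n\right)
\end{aligned}$$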
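A time-aware exponential average of this kind can be sketched as follows. Seeding the average with the first reading is an assumption of this example (the text does not say how the average starts), and the function name and the `(time_in_seconds, value)` input format are hypothetical.

```python
import math


def time_weighted_ema(readings, window_minutes):
    """readings: iterable of (time_in_seconds, value) pairs in time order."""
    averages = []
    average = None
    previous_time = None
    for t, y in readings:
        if average is None:
            average = y  # assumption: seed with the first reading
        else:
            # Weight kept by the old average decays with the elapsed time.
            decay = math.exp(-(t - previous_time) / (window_minutes * 60))
            average = (1 - decay) * y + decay * average
        previous_time = t
        averages.append(average)
    return averages


# Queue length sampled every 5 seconds, averaged over a 15-minute window.
queue_samples = [(0, 0.0), (5, 1.0), (10, 1.0)]
load = time_weighted_ema(queue_samples, 15)
print(load)
```

Because the decay factor is recomputed from the actual gap between readings, unevenly spaced samples are handled without any change to the code.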

Other weightings

Other weighting systems are used occasionally – for example, in share trading a volume weighting will weight each time period in proportion to its trading volume.

A further weighting, used by actuaries, is Spencer's 15-Point Moving Average[14] (a central moving average). Its symmetric weight coefficients are [−3, −6, −5, 3, 21, 46, 67, 74, 67, 46, 21, 3, −5, −6, −3], which factors as [1, 1, 1, 1] × [1, 1, 1, 1] × [1, 1, 1, 1, 1] × [−3, 3, 4, 3, −3] / 320 and leaves samples of any cubic polynomial unchanged.[15]
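The factorisation can be checked by convolving the four short kernels, reading "×" as polynomial multiplication (discrete convolution). The helper `convolve` below is a plain-Python stand-in written for this sketch.

```python
def convolve(a, b):
    """Discrete convolution of two coefficient lists."""
    out = [0] * (len(a) + len(b) - 1)
    for i, x in enumerate(a):
        for j, y in enumerate(b):
            out[i + j] += x * y
    return out


factors = [
    [1, 1, 1, 1],
    [1, 1, 1, 1],
    [1, 1, 1, 1, 1],
    [-3, 3, 4, 3, -3],
]

kernel = [1]
for factor in factors:
    kernel = convolve(kernel, factor)

# Dividing by 320 (the sum of the integer coefficients) gives the weights.
spencer_weights = [w / 320 for w in kernel]
print(kernel)

# A cubic sampled at integer points is reproduced at the window centre.
t = 10
smoothed_times_320 = sum(w * (t + j) ** 3 for j, w in zip(range(-7, 8), kernel))
print(smoothed_times_320 == 320 * t ** 3)
```

The cubic check is done in integer arithmetic (before dividing by 320) so that the comparison is exact rather than subject to floating-point rounding.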

Outside the world of finance, weighted running means have many forms and applications. Each weighting function or "kernel" has its own characteristics. In engineering and science the frequency and phase response of the filter is often of primary importance in understanding the desired and undesired distortions that a particular filter will apply to the data.

A mean does not just "smooth" the data. A mean is a form of low-pass filter. The effects of the particular filter used should be understood in order to make an appropriate choice. On this point, the French version of this article discusses the spectral effects of 3 kinds of means (cumulative, exponential, Gaussian).

Moving median

From a statistical point of view, the moving average, when used to estimate the underlying trend in a time series, is susceptible to rare events such as rapid shocks or other anomalies. A more robust estimate of the trend is the simple moving median over $n$ time points:

$$\widetilde{p}_\text{SM} = \operatorname{Median}(p_M, p_{M-1}, \ldots, p_{M-n+1})$$

where the median is found by, for example, sorting the values inside the brackets and finding the value in the middle. For larger values of $n$, the median can be efficiently computed by updating an indexable skiplist.[16]
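A brute-force moving median, which re-sorts each window rather than using the skiplist approach mentioned above, can be sketched with the standard library. The function name and the sample data are illustrative.

```python
from statistics import median


def moving_median(values, n):
    """Median of the last n values at each position where a full window exists."""
    if n <= 0:
        raise ValueError("n must be positive")
    return [median(values[i - n + 1:i + 1]) for i in range(n - 1, len(values))]


def moving_mean(values, n):
    """Plain trailing mean over the same windows, for comparison."""
    return [sum(values[i - n + 1:i + 1]) / n for i in range(n - 1, len(values))]


data = [1, 100, 2, 3, 4]          # a single spike at the second point
medians = moving_median(data, 3)
means = moving_mean(data, 3)
print(medians)
print(means)
```

In this example the spike pulls the first two window means up to 35 or so, while the medians stay close to the typical values, which illustrates the robustness described above. Re-sorting each window costs more per step than an incremental structure, so this form suits small $n$.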

Statistically, the moving average is optimal for recovering the underlying trend of the time series when the fluctuations about the trend are normally distributed. However, the normal distribution does not place high probability on very large deviations from the trend, which explains why such deviations will have a disproportionately large effect on the trend estimate. It can be shown that if the fluctuations are instead assumed to be Laplace distributed, then the moving median is statistically optimal.[17] For a given variance, the Laplace distribution places higher probability on rare events than does the normal, which explains why the moving median tolerates shocks better than the moving mean.

When the simple moving median above is central, the smoothing is identical to the median filter which has applications in, for example, image signal processing.

Moving average regression model

In a moving average regression model, a variable of interest is assumed to be a weighted moving average of unobserved independent error terms; the weights in the moving average are parameters to be estimated.

Those two concepts (the moving average as a smoothing calculation and the moving average regression model) are often confused due to their name, but while they share many similarities, they represent distinct methods and are used in very different contexts.

See also

- Exponential smoothing

- Moving average convergence/divergence indicator

- Window function

- Moving average crossover

- Rising moving average

- Rolling hash

- Running total

- Local regression (LOESS and LOWESS)

- Kernel smoothing

- Moving least squares

- Savitzky–Golay filter

- Zero lag exponential moving average

Notes and references

- ^ Hydrologic Variability of the Cosumnes River Floodplain (Booth et al., San Francisco Estuary and Watershed Science, Volume 4, Issue 2, 2006)

- ^ Statistical Analysis, Ya-lun Chou, Holt International, 1975, ISBN 0-03-089422-0, section 17.9.

- ^ The derivation and properties of the simple central moving average are given in full at Savitzky–Golay filter.

- ^ "Weighted Moving Averages: The Basics". Investopedia.

- ^ "Archived copy". Archived from the original on 2010-03-29. Retrieved 2010-10-26.

- ^ NIST/SEMATECH e-Handbook of Statistical Methods: Single Exponential Smoothing at the National Institute of Standards and Technology

- ^ The Maclaurin Series for $1/(1-x)$ is $1 + x + x^2 + \cdots$

- ^ It means $\alpha \to 0$, and the Taylor series of $\log(1-\alpha) = -\alpha - \alpha^2/2 - \cdots$ approaches $-\alpha$.

- ^ $\log_e(0.001) / 2 = -3.45$

- ^ See the following link for a proof.

- ^ The denominator on the left-hand side should be unity, and the numerator will become the right-hand side (geometric series), $\alpha\left[\frac{1-(1-\alpha)^N}{1-(1-\alpha)}\right]$.

- ^ Because $(1 + x/n)^n$ tends to the limit $e^x$ for large $n$.

- ^ Finch, Tony. "Incremental calculation of weighted mean and variance" (PDF). University of Cambridge. Retrieved 19 December 2019.

- ^ Spencer's 15-Point Moving Average, from Wolfram MathWorld

- ^ Rob J Hyndman. "Moving averages". 2009-11-08. Accessed 2020-08-20.

- ^ "Efficient Running Median using an Indexable Skiplist « Python recipes « ActiveState Code".

- ^ G.R. Arce, "Nonlinear Signal Processing: A Statistical Approach", Wiley: New Jersey, USA, 2005.

External links


Source: https://en.wikipedia.org/wiki/Moving_average

![{\displaystyle {\begin{aligned}x_{n+1}&=(x_{1}+\cdots +x_{n+1})-(x_{1}+\cdots +x_{n})\\[6pt]\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/4198137da4158776d97b63e5924acff1fa5460da)

![{\displaystyle {\begin{aligned}{\textit {CMA}}_{n+1}&={x_{n+1}+n\cdot {\textit {CMA}}_{n} \over {n+1}}\\[6pt]&={x_{n+1}+(n+1-1)\cdot {\textit {CMA}}_{n} \over {n+1}}\\[6pt]&={(n+1)\cdot {\textit {CMA}}_{n}+x_{n+1}-{\textit {CMA}}_{n} \over {n+1}}\\[6pt]&={{\textit {CMA}}_{n}}+{{x_{n+1}-{\textit {CMA}}_{n}} \over {n+1}}\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/ac31c1a45a506a2f7e97c0887afc19b83da137cd)

![{\displaystyle {\begin{aligned}{\text{Total}}_{M+1}&={\text{Total}}_{M}+p_{M+1}-p_{M-n+1}\\[3pt]{\text{Numerator}}_{M+1}&={\text{Numerator}}_{M}+np_{M+1}-{\text{Total}}_{M}\\[3pt]{\text{WMA}}_{M+1}&={{\text{Numerator}}_{M+1} \over n+(n-1)+\cdots +2+1}\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/8ff41461f4d60351300c4ef7cee5f75821fed5ab)

![{\displaystyle {\begin{aligned}S_{t}=\alpha &\left[Y_{t}+(1-\alpha )Y_{t-1}+(1-\alpha )^{2}Y_{t-2}+\cdots \right.\\[6pt]&\left.\cdots +(1-\alpha )^{k}Y_{t-k}\right]+(1-\alpha )^{k+1}S_{t-(k+1)}\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/a2010e0f9130c03db3bccc10401d09ad14d490e5)

![{\displaystyle {\text{EMA}}_{\text{today}}={\text{EMA}}_{\text{yesterday}}+\alpha \left[{\text{price}}_{\text{today}}-{\text{EMA}}_{\text{yesterday}}\right]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/f5618cac038375bf2832f8ce6a42917e5a7464e9)

![{\displaystyle {\text{EMA}}_{\text{today}}={\alpha \left[p_{1}+(1-\alpha )p_{2}+(1-\alpha )^{2}p_{3}+(1-\alpha )^{3}p_{4}+\cdots \right]}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/92eb7704cddb522915f9e484af573a66e11d2400)

![{\displaystyle \alpha \left[(1-\alpha )^{k}+(1-\alpha )^{k+1}+(1-\alpha )^{k+2}+\cdots \right],}](https://wikimedia.org/api/rest_v1/media/math/render/svg/bf74123f6ed7d1ba9a526b0fe36838ba9c850417)

![{\displaystyle \alpha (1-\alpha )^{k}\left[1+(1-\alpha )+(1-\alpha )^{2}+\cdots \right],}](https://wikimedia.org/api/rest_v1/media/math/render/svg/1dbfe024eb53506e6e9a9a4d834af5f0edfcafd3)

![{\displaystyle {\begin{aligned}&{\frac {{\text{weight omitted by stopping after }}k{\text{ terms}}}{\text{total weight}}}\\[6pt]={}&{\frac {\alpha \left[(1-\alpha )^{k}+(1-\alpha )^{k+1}+(1-\alpha )^{k+2}+\cdots \right]}{\alpha \left[1+(1-\alpha )+(1-\alpha )^{2}+\cdots \right]}}\\[6pt]={}&{\frac {\alpha (1-\alpha )^{k}{\frac {1}{1-(1-\alpha )}}}{\frac {\alpha }{1-(1-\alpha )}}}\\[6pt]={}&(1-\alpha )^{k}\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/7460819a37ee41817b79d3078939358ade59f9bd)

![{\displaystyle R_{\mathrm {EMA} }=\alpha \left[1+2(1-\alpha )+3(1-\alpha )^{2}+...+k(1-\alpha )^{k-1}\right]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/1fff0d58c6b00ffbbc98e20d2405b149d1a8ece9)

![{\displaystyle S_{n}=\alpha (t_{n}-t_{n-1})Y_{n}+\left[1-\alpha (t_{n}-t_{n-1})\right]S_{n-1}.}](https://wikimedia.org/api/rest_v1/media/math/render/svg/5f02fa54d8899c48a023c97613336e3b202092b8)

![{\displaystyle S_{n}=\left[1-\exp \left(-{{t_{n}-t_{n-1}} \over {W\cdot 60}}\right)\right]Y_{n}+\exp \left(-{{t_{n}-t_{n-1}} \over {W\cdot 60}}\right)S_{n-1}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/489654cce07841be14e0b10ed8e3146dc6b6da6e)

![{\displaystyle {\begin{aligned}L_{n}&=\left[1-\exp \left({-{\frac {5}{15\cdot 60}}}\right)\right]Q_{n}+e^{-{\frac {5}{15\cdot 60}}}L_{n-1}\\[6pt]&=\left[1-\exp \left({-{\frac {1}{180}}}\right)\right]Q_{n}+e^{-{\frac {1}{180}}}L_{n-1}\\[6pt]&=Q_{n}+e^{-{\frac {1}{180}}}\left(L_{n-1}-Q_{n}\right)\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/9da1772372b3d06e6a7141d79c687c8ae078fc37)

![{\displaystyle \alpha \left[{1-(1-\alpha )^{N} \over 1-(1-\alpha )}\right]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/d7abb88caa8f22d1c01a37c11756f6b43969faa8)
